We don’t want to allocate too much space for our log files. Let’s see how we can manage their lifespan.

What we are going to build

In this example we are going to work with the project introduced in the Spring Boot Log4j 2 advanced configuration #1 – saving logs to files post and available in the spring-boot-log4j-2-scaffolding repository. The project uses a console appender and a file appender, both associated with the root logger. I’m going to introduce a rollover policy which will ensure that the log file doesn’t grow without limit.

Example configuration

In the project repository you’ll find the complete config file. Below I included only the fragments relevant to applying the RollingFile appender:

    # src/main/resources/log4j2-spring.yaml
    Configuration:
      properties:
        property:
          …
          - name: FILE_PATTERN
            value: ${LOG_DIR}/$${date:yyyy-MM}/%d{yyyy-MM-dd-HH}
      appenders:
        …
        RollingFile:
          - name: AllFile
            filePattern: ${FILE_PATTERN}-all-%i.log.gz
            fileName: ${LOG_DIR}/all.log
            PatternLayout:
              Pattern: ${FILE_LOG_PATTERN}
            policies:
              CronTriggeringPolicy:
                schedule: "0 0 * * * ?"
              SizeBasedTriggeringPolicy:
                size: 20MB
            DefaultRolloverStrategy:
              max: 10
      Loggers:
        Root:
          level: info
          AppenderRef:
            - ref: AllFile
            …

The difference between the File and RollingFile appenders is that the latter rolls the files over according to the given policies. The appender name, fileName and PatternLayout properties are the same as in the example explained in the Spring Boot Log4j 2 advanced configuration #1 – saving logs to files post. In the following sections we’re going to focus on the attributes specific to the RollingFile appender.

When to trigger a rollover

We need to decide under what condition a file rollover should occur.

policies

A file rollover can be triggered by any of the policies listed in the policies section of our configuration. Log4j 2 calls this combination the Composite Triggering Policy. Below you will find a short description of the policies configured in our example.

CronTriggeringPolicy

You can use this policy if the filePattern property of the appender contains a timestamp. If not, the content of the target file (all.log) is going to be changed on every rollover. The schedule accepts a cron expression. In the following snippet you can see that I want to trigger a rollover every hour:

    # src/main/resources/log4j2-spring.yaml
    …
    policies:
      CronTriggeringPolicy:
        schedule: "0 0 * * * ?"
    …
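For comparison, here is a hedged sketch of a different schedule. It is not part of the example project: it assumes you would rather roll over once a day at midnight. The cron expression follows the same field order as the hourly one (second, minute, hour, day of month, month, day of week). With a daily schedule you would probably also want a filePattern whose timestamp has daily granularity (e.g. %d{yyyy-MM-dd}).

```yaml
# Hypothetical variation, not taken from the example repository
policies:
  CronTriggeringPolicy:
    # fields: second minute hour day-of-month month day-of-week
    schedule: "0 0 0 * * ?"   # every day at 00:00:00
```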

SizeBasedTriggeringPolicy

According to this policy, the rollover is going to be triggered every time the file reaches the specified limit. In the example config a new archived log file is going to be created every time the target file reaches 20MB:

    # src/main/resources/log4j2-spring.yaml
    policies:
      …
      SizeBasedTriggeringPolicy:
        size: 20MB
    …

If the filePattern contains a timestamp, it will be updated on every rollover triggered by this policy, except when you combine it with a time based policy.

Combining size and time based policies

When you use the size and time based policies together (when the Default Rollover Strategy is applied):

- the timestamp in file names will be updated only when the particular rollover was triggered by a policy based on time;
- the %i counter in file names will be updated only when the particular rollover was triggered by the SizeBased Policy.

Look at the following config with new schedule (every second), size (every 500B) and filePattern (includes seconds) properties:

    # src/main/resources/log4j2-spring.yaml
    Configuration:
      properties:
        property:
          - name: ARCHIVED_FILE_PATTERN
            value: ${LOG_DIR}/$${date:yyyy-MM}/%d{yyyy-MM-dd-HH:mm:ss}
      appenders:
        …
        RollingFile:
          …
            filePattern: ${ARCHIVED_FILE_PATTERN}-all-%i.log.gz
            …
            policies:
              CronTriggeringPolicy:
                schedule: "* * * * * ?"
              SizeBasedTriggeringPolicy:
                size: 500B
            …

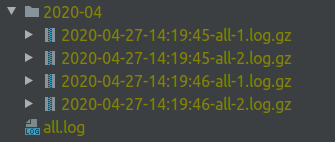

It will produce a list of log files that can look like this:

The first and second rollover were triggered by the SizeBased Policy; the third by the CronTriggering Policy. The fourth was triggered by the SizeBased Policy and the timestamp from the preceding file was used.

How a rollover should be executed

The exact details of a rollover depend on the specified rollover strategy. If you don’t provide a strategy, the Default Rollover Strategy is applied. If you also don’t configure a fileName, the DirectWriteRolloverStrategy will be used instead (since version 2.8).
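To make the second case more concrete, below is a minimal sketch of an appender without a fileName, so log events are written straight to the file resolved from filePattern. The appender name DirectFile, the pattern and the maxFiles value are assumptions made for illustration and do not come from the example project. Verify them against the Log4j 2 version you use.

```yaml
# Hypothetical appender, not part of the example repository
RollingFile:
  - name: DirectFile
    # no fileName here, so the DirectWriteRolloverStrategy applies
    filePattern: ${LOG_DIR}/%d{yyyy-MM-dd-HH}-direct-%i.log
    PatternLayout:
      Pattern: ${FILE_LOG_PATTERN}
    policies:
      SizeBasedTriggeringPolicy:
        size: 20MB
    DirectWriteRolloverStrategy:
      maxFiles: 10   # assumed limit of files kept per time period
```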

Default Rollover Strategy

This strategy is used in the example config. It updates the timestamp and the %i counter in file names, and compresses the archive files using the gzip format. You can replace the .gz extension in the filePattern property with .zip, .bz2, .deflate, .pack200, or .xz and the resulting archive will be compressed with the scheme that corresponds to the suffix. For zip files you can even specify the compressionLevel parameter.
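As a sketch of the zip variant, the fragment below switches the suffix to .zip and sets compressionLevel. The value 9 is only an assumption picked for illustration (the level ranges from 0 for no compression to 9 for the best compression). The remaining appender attributes are assumed to stay as in the example config.

```yaml
# Hypothetical variation of the AllFile appender
RollingFile:
  - name: AllFile
    fileName: ${LOG_DIR}/all.log
    filePattern: ${FILE_PATTERN}-all-%i.log.zip   # .zip instead of .gz
    # PatternLayout and policies unchanged
    DefaultRolloverStrategy:
      compressionLevel: 9   # assumed value; only applies to zip archives
      max: 10
```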

The default maximum number of files is 7. Once it’s reached, older files are removed, so be sure to adjust it to your needs. For example, I can set it to 3:

    # src/main/resources/log4j2-spring.yaml
    RollingFile:
      - name: AllFile
        …
        DefaultRolloverStrategy:
          max: 3
        …

As a consequence, during the fourth rollover the all-1.log.gz file will be removed, the remaining archived files will be renamed by decreasing their counters (e.g. all-2.log.gz will become all-1.log.gz), and the all.log file will be archived as all-3.log.gz. Remember that by default files with a higher index are newer than files with a lower index. If you want to reverse that, set the fileIndex property to min.
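A minimal sketch of that switch, assuming the rest of the appender stays as in the example config: with fileIndex set to min, the archive with index 1 should always be the most recent one and older archives get higher indexes.

```yaml
# Hypothetical variation of the AllFile appender
RollingFile:
  - name: AllFile
    # other attributes unchanged
    DefaultRolloverStrategy:
      fileIndex: min   # lower index = newer archive
      max: 3
```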

When you combine the SizeBased Triggering Policy with a time-based policy, you can end up with more than just 3 files. A rollover triggered by the CronTriggering policy won’t increment the counter. The max property in our config limits the value of the %i counter in the filePattern, not the total number of files.

You can gain more granular control over deleting log files by using the Log Archive Retention Policy.
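In the Log4j 2 documentation this retention policy is configured as a Delete action nested inside the DefaultRolloverStrategy (available since version 2.5). The fragment below is only a sketch under assumptions: the glob is my guess at what would match the archived files from this example, and the 60-day age is arbitrary. Test it on a copy of your logs before relying on it, since the action removes files.

```yaml
# Hypothetical retention rule, not part of the example repository
DefaultRolloverStrategy:
  max: 10
  Delete:
    basePath: ${LOG_DIR}
    maxDepth: 2                  # look inside the yyyy-MM subdirectories
    IfFileName:
      glob: "*/*-all-*.log.gz"   # assumed to match the archived files
    IfLastModified:
      age: 60d                   # assumed: delete archives 60 days old or older
```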

filePattern

While the current log file (containing the latest entries) is named after the fileName value, the archived files require their own pattern. The result is closely related to the applied rollover strategy. In this example we’re going to use the DefaultRolloverStrategy. Keep in mind that choosing a different strategy (e.g. the DirectWrite Rollover Strategy) requires research on which patterns are compatible and whether file renaming is performed.

The pattern applied to log files in our example has the following structure:

|

1 |

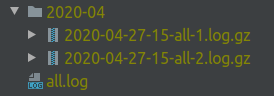

${LOG_DIR}/$${date:yyyy-MM}/%d{yyyy-MM-dd-HH}-all-%i.log.gz |

This gives us a structure like the one in the image below:

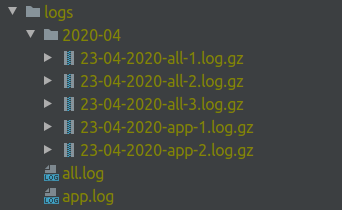

Design a pattern that can accommodate future appenders. Adding a second logger with its own RollingFile appender using the /$${date:yyyy-MM}/%d{dd-MM-yyyy} naming convention may look like the snippet below:

    # src/main/resources/log4j2-spring.yaml
    Configuration:
      properties:
        property:
          …
          - name: ARCHIVED_FILE_PATTERN
            value: ${LOG_DIR}/$${date:yyyy-MM}/%d{dd-MM-yyyy}
      appenders:
        …
          - name: KeepgrowingAppFile
            fileName: ${LOG_DIR}/app.log
            filePattern: ${ARCHIVED_FILE_PATTERN}-app-%i.log.gz
            …
            policies:
              …
      Loggers:
        logger:
          - name: in.keepgrowing.springbootlog4j2scaffolding
            level: info
            AppenderRef:
              - ref: KeepgrowingAppFile
        Root:
          …

This configuration will produce a directory tree like the one in the following screenshot:

Take your time to choose the pattern that is the most suitable for your needs.

The work presented in this article is contained in the commit f14792d74a815c9e8affcce19568cae0df9ffe3f.

Performance

If you are trying to improve performance while logging to files, check out the RollingRandomAccessFileAppender.
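As a starting point, the sketch below swaps the appender type key from RollingFile to RollingRandomAccessFile. It assumes that the attributes used in this article (fileName, filePattern, PatternLayout, policies and the rollover strategy) carry over unchanged. Check the Log4j 2 documentation for the options specific to this appender before adopting it.

```yaml
# Hypothetical variation, not part of the example repository
appenders:
  RollingRandomAccessFile:
    - name: AllFile
      fileName: ${LOG_DIR}/all.log
      filePattern: ${FILE_PATTERN}-all-%i.log.gz
      PatternLayout:
        Pattern: ${FILE_LOG_PATTERN}
      policies:
        SizeBasedTriggeringPolicy:
          size: 20MB
      DefaultRolloverStrategy:
        max: 10
```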

Photo by Dave Meier on StockSnap